Good Morning from my Robotics Lab! This is Shadow_8472 and today I have a Virtual Machine (VM) tutorial aimed at Electric Quilt 8 (EQ8) users switching to Linux. Patient zero is my mother’s Linux laptop, but the screenshots are from a second pass I did on my own computer. Let’s get started!

Overview

So, you’re switching to Linux, but that one last holdout still locks you to the Windows ecosystem. You probably shouldn’t be trusted in the BIOS and got help installing Linux from your local nerd who tells ghost stories about Microsoft (they’re all true, especially the lies). The command line scares you. If this is you, stick around to see how I install Windows 7 in a VM (Virtual Machine). More advanced users are welcome too.

For this project, I am assuming you have:

- Enough literacy in Windows to use it for daily tasks.

- A Linux desktop with Internet access (I am working with Fedora 43 and PopOS 22.04)

- Admin (sudo) privileges and repository access – either through an app store or your package manager

- A valid Windows key (I am working with Windows 7 Ultimate)

For best results, ask your tech support to enable virtualization in the BIOS. My blog uses the IEEE citation standard, so when you see numbers in brackets –such as [0]– the Works Cited has a corresponding entry at the end containing a relevant link.
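If you (or your tech support) would like a quick look at whether virtualization is available before touching the BIOS, here is a minimal sketch. It assumes a Linux host that exposes `/proc/cpuinfo`; the function name `cpu_has_virt` is my own for illustration. Treat the result as a hint only: the flags describe what the CPU advertises, and the BIOS setting can still be off.

```shell
# cpu_has_virt [CPUINFO_FILE]
# Succeeds if the CPU flags include vmx (Intel VT-x) or svm (AMD-V).
# The optional argument exists only so the check can be tried on a sample file.
cpu_has_virt() {
    grep -Eqw 'vmx|svm' "${1:-/proc/cpuinfo}"
}

if cpu_has_virt; then
    echo "CPU advertises hardware virtualization."
else
    echo "No vmx/svm flag found; ask about enabling virtualization in the BIOS."
fi

# /dev/kvm normally appears only once the kernel's KVM support is loaded.
if [ -e /dev/kvm ]; then
    echo "/dev/kvm is present, so KVM looks usable."
fi
```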

And because it needs to be said, I OFFER THIS GUIDE AS A LAST RESORT WHEN WINE ET AL. DON’T WORK. RUNNING OBSOLETE SOFTWARE CARRIES SOME INHERENT RISK. I HAVE MADE AN EFFORT TO FIND A GOOD PATH, AND I WILL OFFER ASSISTANCE AS BEST I CAN IF YOU REACH OUT, BUT IN THE END, I AM NOT IN A POSITION TO OFFER A WARRANTY EITHER EXPLICIT OR IMPLICIT. PRACTICE COMMON SENSE AT ALL TIMES WHEN USING UNSUPPORTED OPERATING SYSTEMS.

With that said, I’ll try to make this guide as software version agnostic as reasonably possible.

Project Recap

EQ8 is a quilting program. While some credible reports claim to run it in a compatibility layer (WINE, Proton, CrossOver, etc.), these platforms focus on games and often require tweaks. Someone stubborned Photoshop into WINE (for now, anyway), but many Linux converts switch to native programs without proprietary gotchas. Suffice it to say, I’ve not been so successful with EQ8.

So I switched to VMs. ReactOS is a free and open source (its code is public knowledge) operating system built to mimic Windows’ structure – just as Linux did with Unix. Last I checked in, a Vista/7 era graphics engine was being added as a long-term milestone. One joke was that development was on track for Windows 13 refugees. I’m writing in 2026, so if it’s been a year or few and ROS 0.5.0+ is released, it may be worth your time.

Obtaining Windows 7

In any case, I caved and got my mother Windows 7 last Christmas. If you have an old “retail” license (it can’t say “OEM” as in Original Equipment Manufacturer), use that. Keys on Amazon and eBay are priced like collector’s items and cost even more sealed. Otherwise, the only official valid keys are through Microsoft’s cloud services (no surprise). And then there are “gray market” sites. I found Gamers Outlet listing Win7 Ultimate for $10 (I’m looking at $9.35 writing my 1st draft) [1]. It’s suspiciously cheap, but MajorGeeks.com vouches for them, pointing out how discount key sellers can 1) bulk buy, 2) exploit regional pricing, 3) deal only in the keys (no box, paraphernalia, or shipping thereof), and 4) streamline operations for low overhead costs [2].

Now, I have had two good experiences so far, but while editing, I found several claims from 3 years ago about people getting second-hand OEM keys that might get invalidated in a month/year or by changing hardware too much. While Win7 Ultimate OEM was a real product, ShowKeyPlus displayed my keys as Retail.

Remember to save your key somewhere. I saved mine like I would a password –in BitWarden password manager– with the download link from Gamers Outlet, which I had to fiddle with by the time I got around to downloading. For mine, I downloaded from Archive.org. If you have original disks, use those. Otherwise I recommend adding sp1 to your search.

Verifying Your Download With Shasum

If you download your installation media, be aware of dirty downloads preloaded with malware that can affect your VM. You really should check your download’s hash with shasum before proceeding. (Basically, a shasum does some math on a file and returns a number. If you get the expected number, you are almost guaranteed to have a copy of the original file.) Here is a guide with both graphical and command line instructions: https://itsfoss.com/checksum-tools-guide-linux/ [3]. And if you get lost, you can skip this step for now, but be sure to ask your computer guy to run it for you later.

For my download, I verified my sha256sum given with the Archive.org download, but to be on the safe side, I also ran sha1sum and looked for the result in a list of sha1sum results compiled by ifelsee on GitHub: https://gist.github.com/ifelsee/16219e4f927bf75fcb469c16e69b9476 [4].
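For anyone comfortable in a terminal, the two checks I describe can be sketched roughly like this. The function names are my own, and any file names you pass in (your ISO, a saved copy of the SHA-1 list) are whatever you called them on your system. `sha256sum` and `sha1sum` come with GNU coreutils on most Linux distributions.

```shell
# file_hash TOOL FILE
# Print just the hash, dropping the file name that sha256sum/sha1sum append.
file_hash() {
    "$1" "$2" | awk '{print $1}'
}

# verify_hash TOOL FILE EXPECTED
# Succeeds only if the computed hash equals EXPECTED (letter case ignored).
verify_hash() {
    actual="$(file_hash "$1" "$2")"
    expected="$(printf '%s' "$3" | tr 'A-F' 'a-f')"
    if [ "$actual" = "$expected" ]; then
        echo "OK: $2"
    else
        echo "MISMATCH: $2 (got $actual)" >&2
        return 1
    fi
}

# hash_in_list TOOL FILE LIST
# Succeeds if the file's hash appears anywhere in a saved text list.
hash_in_list() {
    grep -qi -- "$(file_hash "$1" "$2")" "$3"
}
```

With those defined, `verify_hash sha256sum win7.iso "<hash from the download page>"` covers the first check, and `hash_in_list sha1sum win7.iso sha1-list.txt` covers the second. Both `win7.iso` and `sha1-list.txt` are placeholder names.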

As an extra step, I attempted to look up ifelsee’s corresponding Microsoft link in an archive. The Wayback Machine supposedly has a couple dozen captures of the page with the shasum I was after, but I just got a 404 error. I found a similar archive site with three captures, but Microsoft was seemingly having errors at the time of the first two, and the third was after the goods were gone.

GNOME Boxes

When the big day came, I chose GNOME-Boxes as a VM manager. Learn Linux TV did an excellent video on GNOME-Boxes, so I highly recommend his video [5]. He installs a Linux VM on a Linux host, so not everything lines up, but it’s still useful.

Power Users: QEMU/KVM with Virtual Machine Manager (VMM) does the same job with more tools. If you install both, either can examine your VMs; VMM lists them under `QEMU/KVM User session`. In fact, while getting screenshots, I had something go wrong with 7 while it was out of focus and needed the extra power to force it into a shutdown state.
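Power Users: I forced my shutdown through VMM's window, but the same user session can also be reached from a terminal with `virsh`, which ships with libvirt's client tools. This is a sketch under assumptions: the wrapper names are mine, the VM name is whatever Boxes called yours, and the `VIRSH` variable is only there so you can do a dry run with `VIRSH=echo`.

```shell
# Run virsh against the per-user session, the same one VMM shows as
# "QEMU/KVM User session". VIRSH can be overridden for a dry run.
vm_virsh() {
    "${VIRSH:-virsh}" --connect qemu:///session "$@"
}

# List every VM in the user session, running or not.
vm_list() {
    vm_virsh list --all
}

# Force a stuck VM off. This is like pulling the power cord,
# so anything unsaved inside the guest is lost.
vm_force_off() {
    vm_virsh destroy "$1"
}
```

Run `vm_list` first to see the exact name, then something like `vm_force_off win7`, where `win7` is a placeholder. Despite the scary name, `destroy` only powers the VM off; it does not delete it.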

Find and install Gnome-Boxes – either from your app store or by copy-pasting the following lines into a terminal according to your Linux distribution:

Debian/Ubuntu

sudo apt update

sudo apt upgrade

sudo apt install gnome-boxes

Fedora/RHEL

sudo dnf install gnome-boxes

Arch

sudo pacman -Syu

sudo pacman -S gnome-boxes

Power Users: Boxes is also on Flatpak and usually better updated.
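If you are not sure which of those families your distribution belongs to, the sketch below reads `/etc/os-release` and prints the matching install line without running it. The function name is made up for this post, and the Flatpak fallback assumes you already have the Flathub remote set up.

```shell
# boxes_install_cmd [OS_RELEASE_FILE]
# Print (not run) the install command that matches this distribution.
boxes_install_cmd() {
    (
        # Read ID and ID_LIKE in a subshell so the current shell stays clean.
        . "${1:-/etc/os-release}"
        case " ${ID:-} ${ID_LIKE:-} " in
            *" debian "*|*" ubuntu "*) echo "sudo apt install gnome-boxes" ;;
            *" fedora "*|*" rhel "*)   echo "sudo dnf install gnome-boxes" ;;
            *" arch "*)                echo "sudo pacman -S gnome-boxes" ;;
            *)                         echo "flatpak install flathub org.gnome.Boxes" ;;
        esac
    )
}
```

Calling `boxes_install_cmd` with no arguments checks the machine you are on. Derivatives usually name their parent in `ID_LIKE`, which is why the match looks at both fields.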

Installing Windows 7

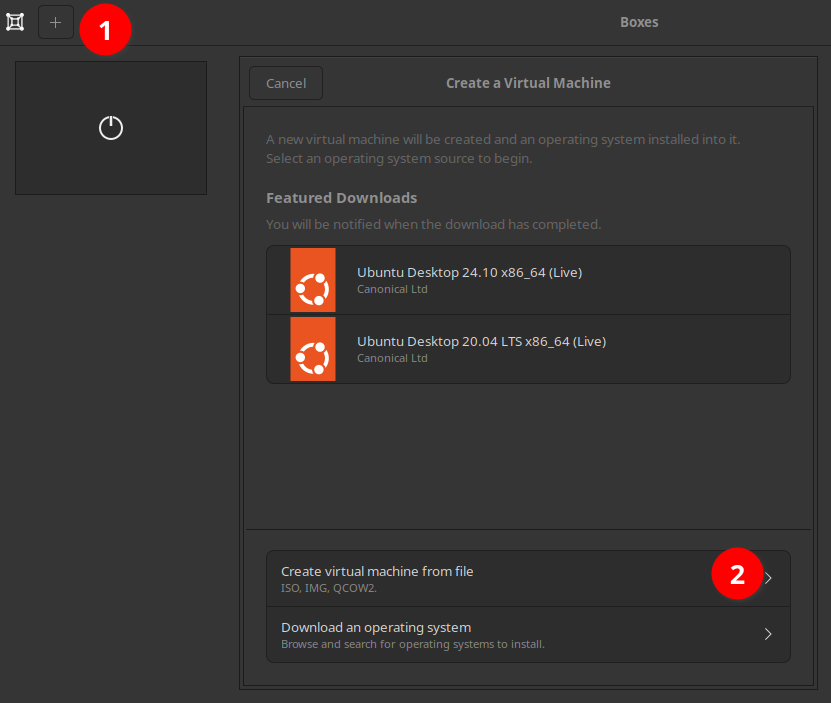

Open Boxes. Create a new VM by clicking the + button in the upper left corner (1 in the screenshot). Create virtual machine from file (2).

Find your Win7 download (I conveniently found mine under recent files).

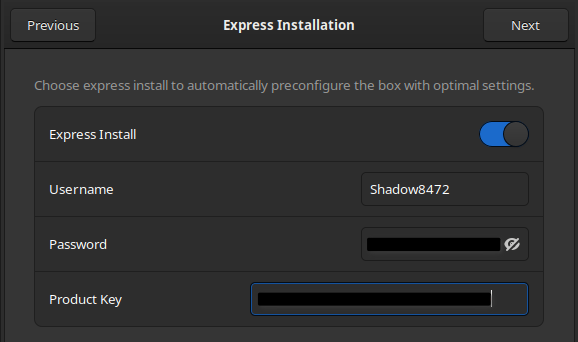

Enter a username, password, and your product key on the express installation page. Alternatively, you can disable Express Install as I did on my first pass, but I won’t be covering that route in as much detail.

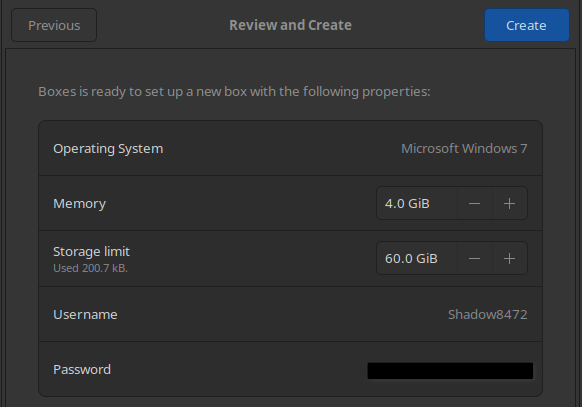

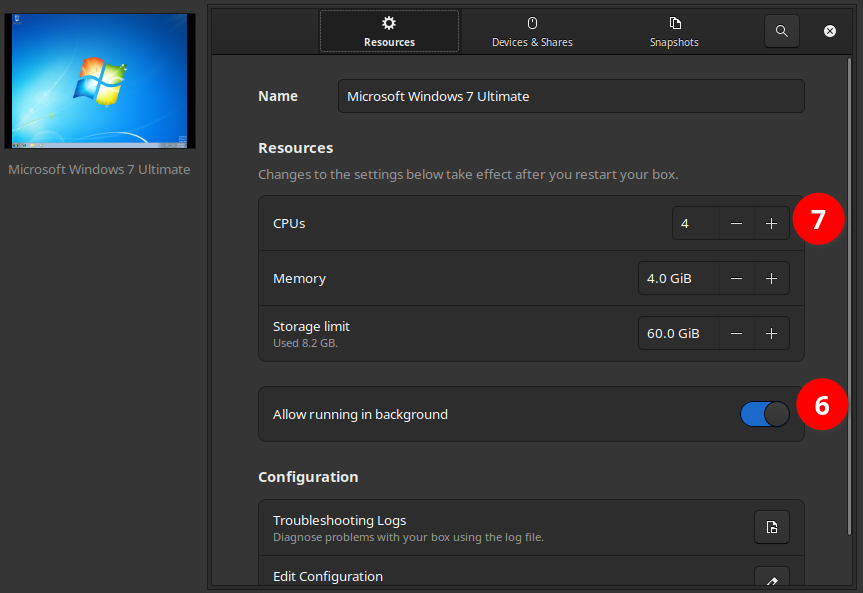

The recommended specs for Windows 7 are 4GB RAM and 60GB disk space – roughly twice what Boxes will give it by default. Edit those figures and hit Create.
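Before handing 4GB of RAM to the VM, it may be worth checking what the host has to spare. A small sketch, assuming a Linux host with `/proc/meminfo`; the function name is my own.

```shell
# host_ram_gib [MEMINFO_FILE]
# Print total host RAM in GiB. The kernel reports MemTotal in kB.
host_ram_gib() {
    awk '/^MemTotal:/ { printf "%.1f\n", $2 / 1048576 }' "${1:-/proc/meminfo}"
}

echo "Host RAM (GiB): $(host_ram_gib)"
```

For disk space, `df -h ~` shows what is free on the filesystem holding your home directory, which is where I am assuming Boxes keeps its disk images.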

Wait for the gear to stop spinning, then open your new VM.

Quality of Life Break

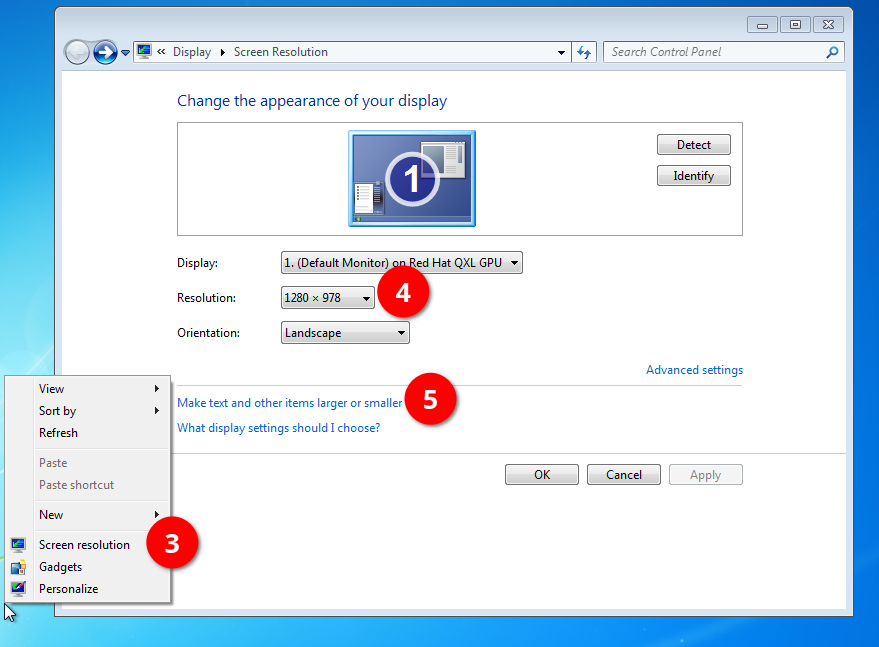

When I did this on my mother’s computer, I squinted through a manual installation on her monitor, which is either 2k or 4k. If you have a black border and/or everything appears tiny, bring up a right-click menu on the desktop and click Screen resolution (3). Adjust your Resolution (4) and Make text and other items larger or smaller (5) as desired. Express Install set good defaults for my 7 installation, however.

If “Large” UI elements like window borders and the Start bar still aren’t big enough for your needs, I found a tutorial on SevenForums.net [6] with instructions on how to set a custom DPI (Dots Per Inch), but have not tested it yet as of writing.

Left Ctrl + Left Alt releases focus from the VM to Linux. This was especially useful when installing my mother’s copy on a single screen, but less so on my dual screen setup. While editing my first draft, I found where Boxes lists its keyboard shortcuts from a menu in the upper-right – including F11 for toggling full screen.

While gathering screenshots for this final draft, I had Windows crash while minimized in Boxes and Boxes out of focus. My mother’s VM fared a bit better through even Linux going to sleep from the lid closing – only having some audio distortions from the VM running at like double speed to catch up with the host system time, presumably. To prevent this from happening again, I’m enabling it to run in the background (6), but I’m also cutting its CPU core count to 4 (7).

SPICE

+1 for Express Install for including Windows SPICE Guest Tools. This section is rendered optional reading now.

Most guest operating systems aren’t designed to communicate with a host, so things like the clipboard (copy/paste) are separate. This makes it very challenging to move your own files on to and off of the VM. SPICE works as a two-part system with a server on the host and a client on the guest OS. Boxes comes with a SPICE server included, and to no one’s surprise, neither does Windows 7.

I got SPICE functionality on my express install. No copy-pasting files, but copy-pasting text in/out and dragging and dropping files into the VM worked out of the box. Otherwise, I installed SPICE on my mother’s VM after finishing with Legacy Update, once I had a browser able to download it from inside Windows.

Updating Windows

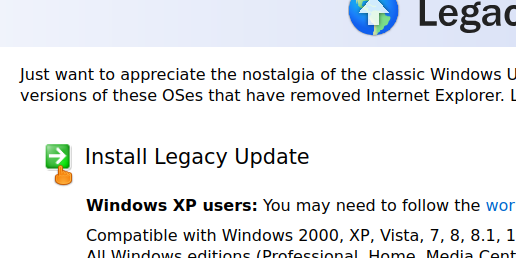

Microsoft no longer hosts the files for updating Windows, but Legacy Update is a community-run resource to restore various functionalities to old, sunset versions of Windows – most notably Windows Update [7]. My first time around, I installed that and spent a movie or two worth of time babysitting the VM. Download it from https://legacyupdate.net and put it on your Windows VM’s desktop. The download link looks like a header as pictured.

Run the installer. Legacy Update gives you a number of options. I’m keeping mine at default, but you can enable the Windows 7 Convenience Rollup Update if you don’t mind unnecessary telemetry being sent back to Microsoft in exchange for a faster install.

Legacy Update’s install wizard ends with a reboot. Open Legacy Update. You can now start the repetitive task of looking for updates, installing them, rebooting, and starting the cycle all over again until you are up to date. It should take two to four hours. That or you could open Legacy Update and enable Automatic Updates. It points to the same setting from deep in Control Panel. From there, Windows should eventually take care of itself in the background if you leave it running for a week or few. My recommendation is to pop in a movie and babysit it – as I did with my mother’s VM. Just ignore the language packs you don’t need.

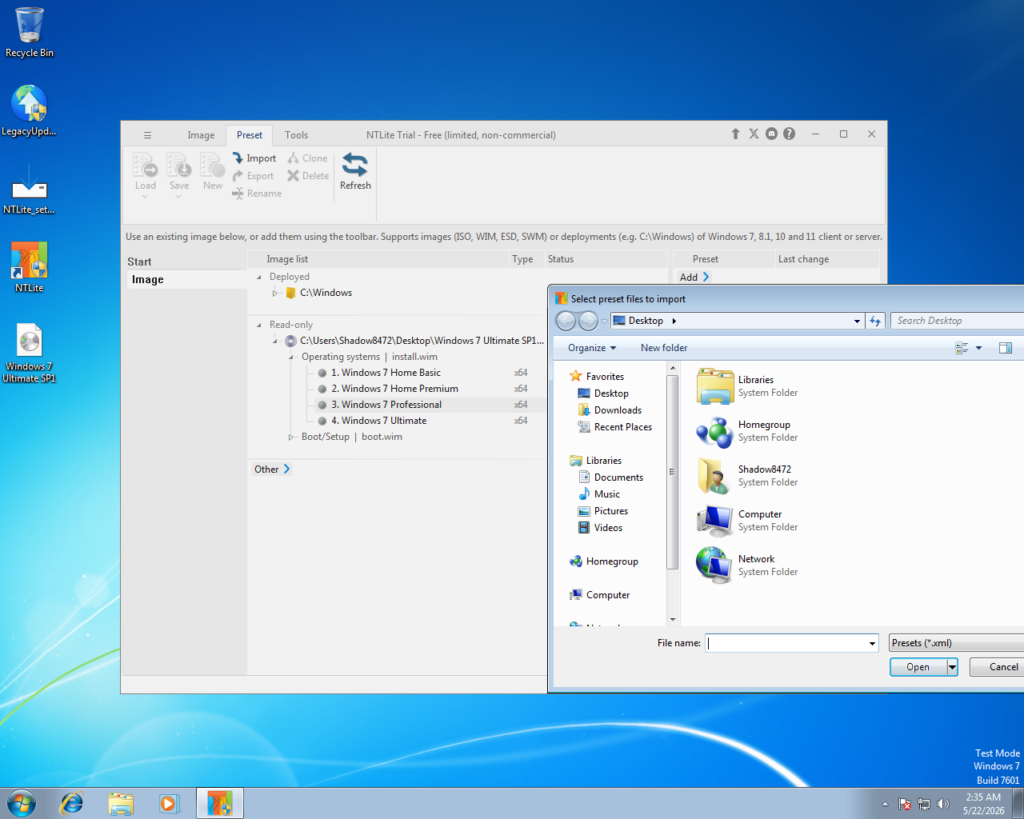

NTLite (Power Users)

Power Users Only for this section, I’m afraid. Due to time constraints and the procedure looking a hair too technical if it does work, I’m presenting this incomplete branch starting immediately after installing Legacy Update and rebooting a first time (Otherwise, NTLite won’t run).

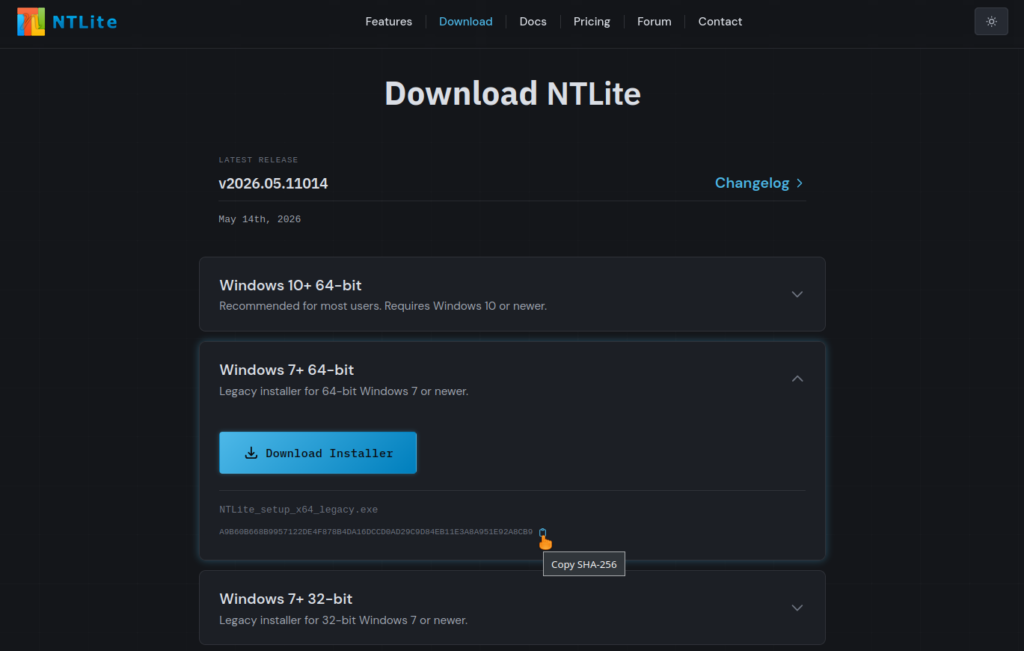

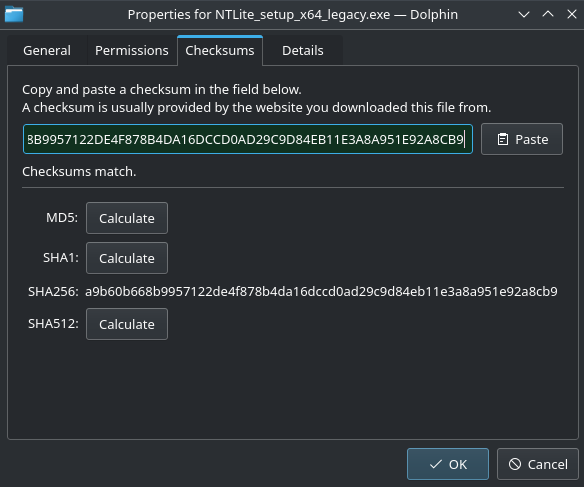

Sometime after finishing Round 1 I was reading up on improving privacy. I learned about NTLite, a powerful tool for modifying Windows [8]. Download it from https://ntlite.com/download/ and check the Shasum as you are able. (Pictured is a Shasum checker built into KDE’s Dolphin file manager.)

Here is where my research ran out of time. I was planning on having everyone use NTLite to inject all the updates into a custom Win7 disk image, then use that to “repair” Windows.

And so, dear power users, if you wish to explore this path further, be my guest.

Shared Folder

While Windows SPICE Guest Tools is included in Boxes’ Express Install, you additionally need spice-webdav –also written as “Spice WebDAV”– for easily moving files from the Windows VM back to the Linux host using shared directories/folders. If you’re following along with the manual install, go get SPICE tools now and install them.

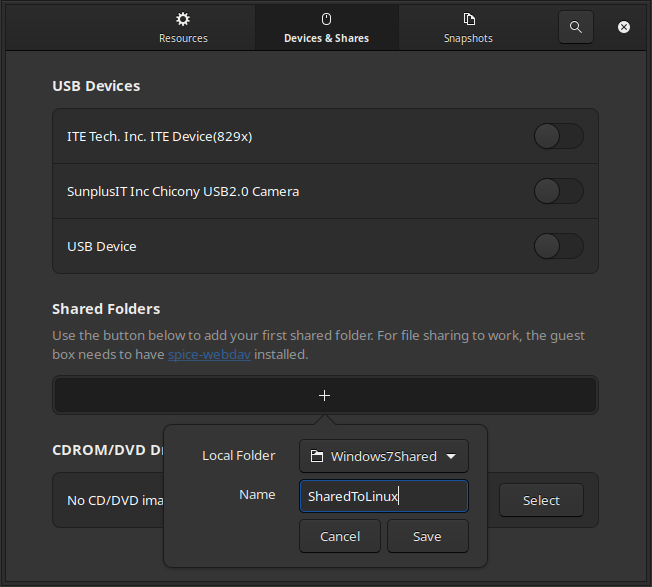

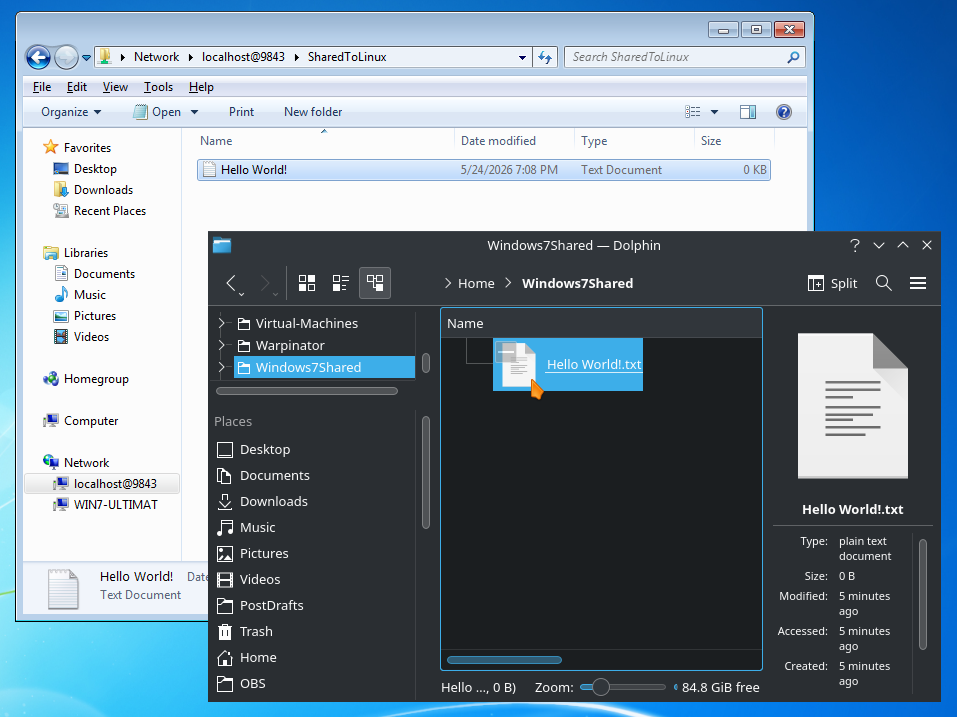

In Boxes, bring up your VM’s preferences and select Devices & Shares. Click the + button under Shared Folders. For demonstration purposes, I made a file on my host home directory as seen in the screenshot.

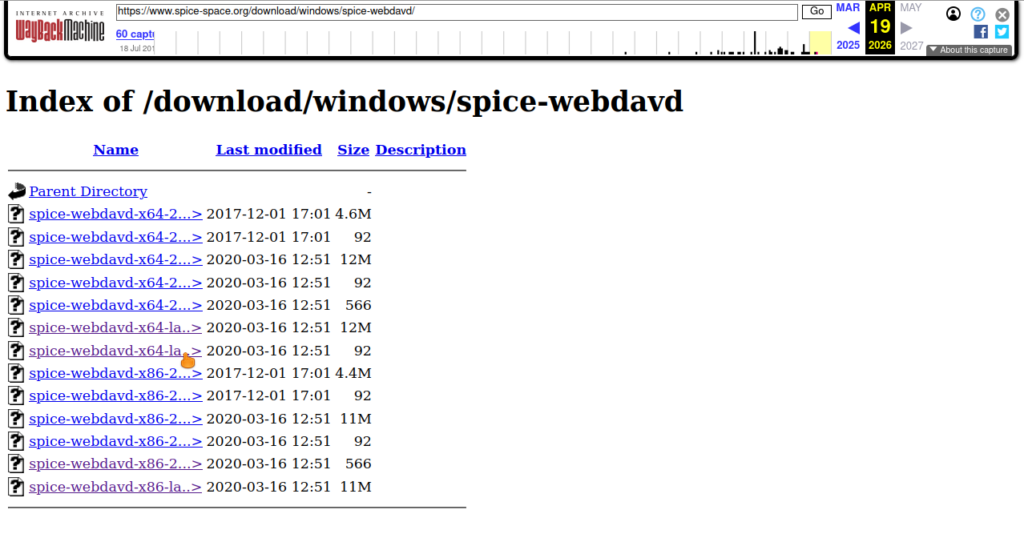

If you are viewing this shortly after publication, there may be a chance that the link from the screenshot (https://www.spice-space.org/download.html [9]) is still broken (isitdownrightnow.com reported a 4 day outage on Sunday, 5-24-2026 [10]). Fortunately, the WaybackMachine has the files. Note: site returned as I was doing citations [10].

Download the latest two links and use Shasum to verify your download against the number in the matching link, same as in the section “Verifying Your Download With Shasum” above. On the odd chance you are using a 32 bit copy of Windows 7, you may need to look up a guide on GPG (GNU Privacy Guard).

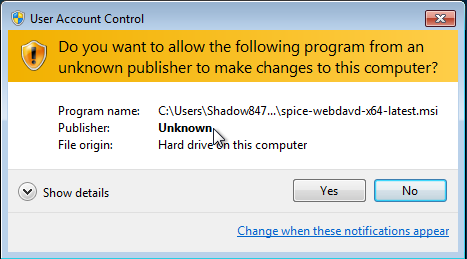

Move the installer to Windows 7’s desktop and run it. User Account Control will warn you of an unknown publisher, but we just took similar steps manually to the desired effect. In short: this software is not signed by Microsoft, but we’re trusting it anyway.

Once that runs, open File Explorer and navigate to Network, and your shared directory should show up automatically as a shared folder in Windows. Anything you put in there will show up in both places – a tunnel, if you will, between your host and guest OSes.

Create Snapshot

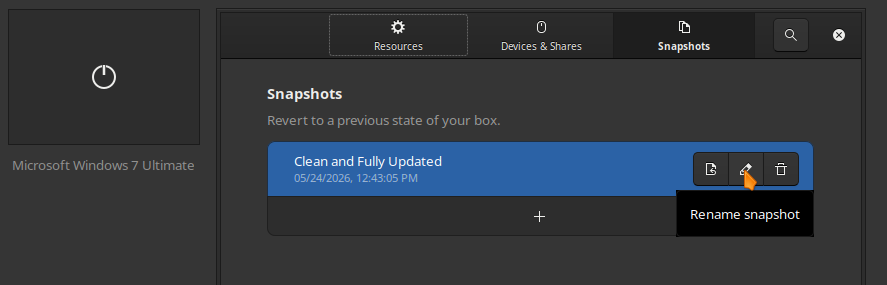

Once you are done setting up your Virtual Machine, open its preferences in Boxes, click on Snapshots at the top, and create a new snapshot.

Optional

This guide assumes most [former] Windows users know how to install a program, but now would be a good time to “move in.” Adjust the wallpaper, look up some guides to lose the “Test Mode” watermark if it bugs you. Delete your installer files off your desktop. Look up how to get Aero Glass working. All the fun things. But there is still a little more to consider doing.

Privacy

In 7’s later years, a certain number of 10’s telemetry features were “backported” to 7. Remember: most of your daily computing is done in Linux now; it can only report what it sees, but there exist guides and scripts to uninstall or block most known telemetry. One place to start looking is Blackbird [11], though the more you rip out or hobble, the greater the chance you damage intended functionality. Remember to use snapshots as you tinker!

Virus Scanner

Conventional wisdom says to never run Windows without virus protection. After looking at available free options, I’d pick AVG because it still supports Windows 7 and hasn’t given an indication of stopping [12]. Feel free to make your own choices though.

On the other hand, by only running Windows for stubborn programs, you massively limit your attack surface. Worst case scenario, you load a snapshot from before you got infected. (You made another snapshot after installing everything, right?) Either way, remember to use common sense. A little goes a long way in terms of online safety. Don’t click on sketchy links.

Browser (For Manual Win7 Install)

After looking at my options for getting files from Linux into Windows on round 1, I settled on a de-Googled Chrome derivative called Supermium [13], though there are additional options if you look. It’s maintained for Windows 7 and still supports Manifest v2, where the modern Manifest v3 breaks several popular privacy/ad-block plugins.

Prayer

It may be a bit weird, but I’m trying to be a bit more proactive about inviting God into my work.

Father in Heaven,

Thank you for helping me get this out on time. Please watch over all my readers and grant clarity to the ones just learning about virtual machines. I know it’s confusing, but I’ve really tried to make it as beginner friendly as possible while still providing for the needs of more advanced students. Nurture their creativity, God.

In Jesus’ name I pray,

Amen.

Takeaway

I’ll give it to the UI (User Interface) artists, Windows 7 is beautiful, even without Aero Glass. Depending which critic you ask, Windows peaked at either XP, Vista (after it was fixed), or 7. My personal nostalgia lands with the twin peaks of XP and 7. XP took advantage of the rapidly increasing graphics capabilities, Vista reached too far, and 7 struck the balance a second time. Ever since then, the trend has been back toward flat colors. 7’s Aero theme was art – even the basic one I’ve been working with.

One more thing of note: I made an observation on my mother’s laptop that Windows seemed to quarter (or so) the expected battery life just by running.

Final Question

What would you have done differently in this guide? Do you have other favorite pieces of software you’d have used instead? Am I missing something important? Let me know in the comments below or by reaching out to me on Discord (Invite to blog server).

Works Cited

[1]. Gamers Outlet, “Microsoft Windows 7 Ultimate Retail (Lifetime License / Global),” Gamers Outlet, [Online]. Available: https://www.gamers-outlet.net/en/buy-windows-7-ultimate-cd-key-microsoft-global. [Accessed: May 24, 2026].

[2]. C. Punishment, “The Truth About Cheap Software Keys and Where to Buy,” MajorGeeks.com, Dec. 21, 2024. [Online]. Available: https://www.majorgeeks.com/content/page/the_truth_about_cheap_software_keys_and_where_to_buy_them.html. [Accessed: May 24, 2026].

[3]. Sreenath and Abhishek Prakash, “How to Verify Checksum on Linux,” It’s Foss, Aug. 12, 2023. [Online]. Available: https://itsfoss.com/checksum-tools-guide-linux/. [Accessed: May 24, 2026].

[4]. Ifelsee, “windows 7 SHA1 List,” GitHub Gist, Nov. 7, 2021 [Revised Nov. 7, 2021]. [Online]. Available: https://gist.github.com/ifelsee/16219e4f927bf75fcb469c16e69b9476. [Accessed: May 24, 2026].

[5]. Jay, “GNOME Boxes Tutorial: Run Linux VMs the Easy Way,” YouTube.com, April 7, 2025. [Online]. Available: https://www.youtube.com/watch?v=tbhVLkVY-yE. [Accessed: May 24, 2026].

[6]. S. Brink, “How to Change the Display DPI Size in Windows 7 and Windows 8,” WindowsSevenForums, Feb 2010. [Online]. Available: https://www.sevenforums.com/tutorials/443-dpi-display-size-settings-change.html. [Accessed: May 24, 2026].

[7]. Legacy Update, “Welcome to Legacy Update,” legacyupdate.net, [Online]. Available: https://www.legacyupdate.net. [Accessed: May 24, 2026].

[8]. NTLite, “Automate, Secure, and Customize Windows, Locally,” ntlite.com, [Online]. Available: https://ntlite.com. [Accessed: May 24, 2026].

[9]. SPICE, “Download,” spice-space.org, [Online]. Available: https://www.spice-space.org/download.html. [Accessed: May 24, 2026].

[10]. IsItDownRightNow?, “Spice-space.org Server Status Check,” isitdownrightnow.com, [Online]. Available: https://www.isitdownrightnow.com/spice-space.org.html. [Accessed: May 24, 2026].

[11]. Blackbird, “Windows privacy, security and performance,” getblackbird.net, Dec. 7, 2022. [Online]. Available: https://www.getblackbird.net/. [Accessed: May 24, 2026].

[12]. AVG Antivirus, “Protect your Windows 7 PC with our free, acclaimed antivirus,” 2026. [Online]. Available: https://www.avg.com/en-us/windows-7-antivirus. [Accessed: May 24, 2026].

[13]. win32ss, “Supermium,” github.com, May 9, 2023 [Revised May 10, 2026]. [Online]. Available: https://github.com/win32ss/supermium. [Accessed: May 24, 2026].